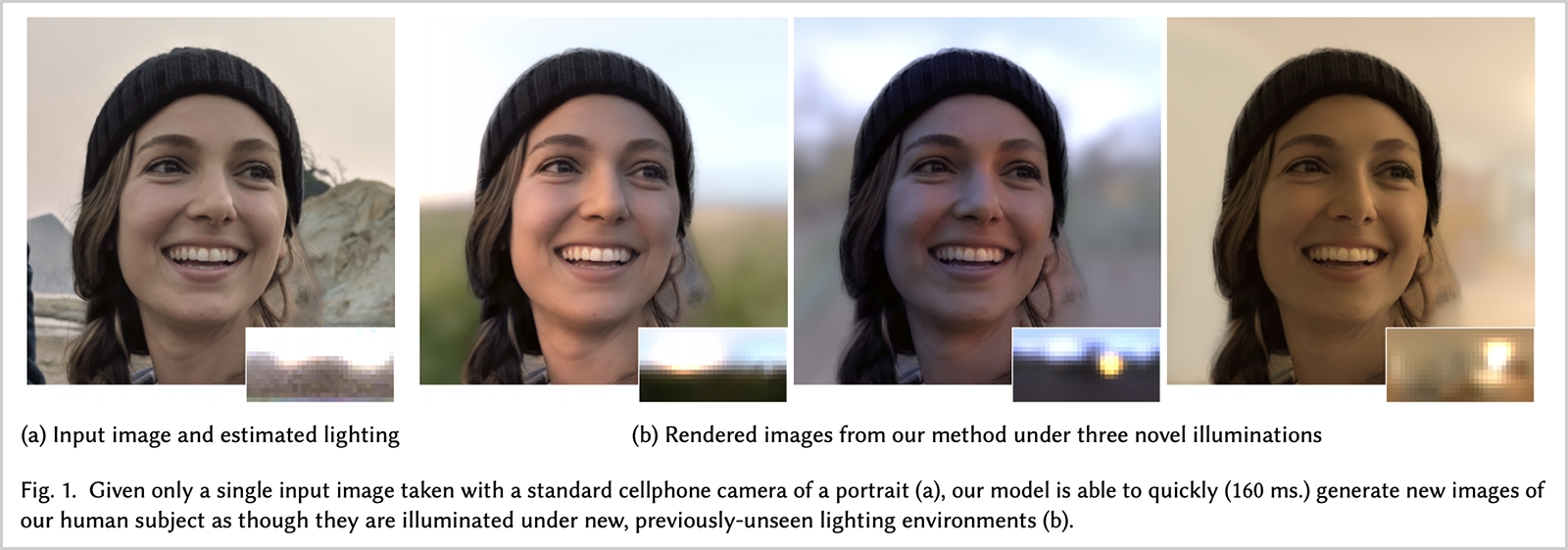

A group of researchers from the University of California, San Diego, and Google have found a way to relight portrait photos “according to any provided environment map.”

The researchers trained a neural network that takes a single portrait photo and lets you adjust its lighting at will, including the direction, color temperature, and quality of the light.

With this model, you can relight your portraits using canonical or user-specified lighting environments, much like applying one of the popular “filters” on social media platforms such as Instagram.
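An environment map is an image that records how much light arrives at a point from every direction. The sketch below shows how a light direction can be looked up in one. It assumes the common equirectangular (latitude-longitude) layout and an axis convention chosen for illustration; the article doesn't say which format the researchers used, and the function names are hypothetical.

```python
import numpy as np


def direction_to_pixel(direction, height, width):
    """Map a 3D light direction to (row, col) in an equirectangular environment map.

    Assumed convention (hypothetical, not taken from the paper): +y is up, the
    polar angle theta is measured from +y, and the azimuth phi = atan2(x, z)
    runs across the image width from -pi (left edge) to +pi (right edge).
    """
    x, y, z = np.asarray(direction, dtype=float) / np.linalg.norm(direction)
    theta = np.arccos(np.clip(y, -1.0, 1.0))  # 0 at the zenith, pi at the nadir
    phi = np.arctan2(x, z)                    # in [-pi, pi]
    col = int((phi + np.pi) / (2 * np.pi) * width)
    row = int(theta / np.pi * height)
    # Clamp so directions on the far edges stay inside the image.
    return min(row, height - 1), min(col, width - 1)


def sample_environment(env_map, direction):
    """Return the RGB light the map stores for the given incoming direction."""
    height, width, _ = env_map.shape
    row, col = direction_to_pixel(direction, height, width)
    return env_map[row, col]


# A tiny 4x8 "sky": bright warm light in the upper half, dim bluish light below.
env = np.zeros((4, 8, 3))
env[:2] = [1.0, 0.9, 0.7]
env[2:] = [0.05, 0.05, 0.1]

print(sample_environment(env, [0, 1, 0]))   # straight up: the warm sky color
print(sample_environment(env, [0, -1, 0]))  # straight down: the dim ground color
```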

To train the neural network, the researchers photographed 18 individuals in a studio. Each person was captured from 7 different angles at the same time while “a densely sampled sphere of lights” illuminated them from 304 different directions.
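A light-stage capture like this is valuable because light transport is linear: a photo of a subject under any lighting environment can be approximated as a weighted sum of one-light-at-a-time (OLAT) photos, each weighted by how much light the target environment sends from that light's direction. The article doesn't detail how the researchers turned their 304-light captures into training data, so the snippet below is only a minimal sketch of this general image-based relighting technique, with a hypothetical function name and random stand-in images.

```python
import numpy as np


def relight_from_olat(olat_images, light_rgb):
    """Relight a subject as a weighted sum of one-light-at-a-time (OLAT) images.

    olat_images: shape (num_lights, H, W, 3), one photo per light, each taken
                 with only that light switched on at unit intensity.
    light_rgb:   shape (num_lights, 3), the RGB intensity the target environment
                 sends from each light's direction (for example, sampled from
                 an environment map and scaled by the solid angle each light covers).

    Because light transport is linear, the subject lit by all lights at once
    equals the per-channel weighted sum of the individual OLAT images.
    """
    olat_images = np.asarray(olat_images, dtype=float)
    light_rgb = np.asarray(light_rgb, dtype=float)
    # Sum over the light axis n, scaling each image's channel c by that light's RGB.
    return np.einsum("nhwc,nc->hwc", olat_images, light_rgb)


# Stand-in data: 304 lights (as in the article) and tiny random 8x8 "photos".
rng = np.random.default_rng(0)
num_lights = 304
olat = rng.random((num_lights, 8, 8, 3))

# A hypothetical environment: warm light coming only from the first half of the lights.
env_weights = np.zeros((num_lights, 3))
env_weights[: num_lights // 2] = [1.0, 0.8, 0.6]
env_weights /= num_lights  # keeps the summed pixel values roughly within [0, 1]

relit = relight_from_olat(olat, env_weights)
print(relit.shape)  # (8, 8, 3)
```

Setting one light's weight to 1 and all others to 0 returns that light's original photo, which is a quick sanity check on the linearity assumption.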

Because the network was trained on real photographs, it preserves the subtleties of human facial appearance, such as shading, scattering, specularities, and shadowing.

Using the trained network, the researchers can then take ordinary smartphone photos as input and “relight” them as if they had been captured in a variety of environments.

It works somewhat like the portrait mode on Apple’s iPhone and Google’s Pixel phones. The main difference is that this technique doesn’t need a depth map or any other data beyond a single RGB image, and its results look much better.

Even more intriguing, the researchers say the technique “can produce a 640 x 640 image in just under 160 milliseconds.”
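To put that figure in perspective, here is the back-of-the-envelope arithmetic. The article doesn't say what hardware produced the timing, so these rates simply restate the quoted number; since the actual time is slightly under 160 ms, the true rates are slightly higher.

```python
# Throughput implied by the quoted timing (hardware not stated in the article).
width, height = 640, 640
seconds_per_image = 0.160  # "just under 160 milliseconds", rounded up to 160 ms

pixels = width * height                         # 409,600 pixels per image
images_per_second = 1 / seconds_per_image       # about 6.25 images per second
pixels_per_second = pixels / seconds_per_image  # about 2.56 million pixels per second

print(f"{pixels:,} px, {images_per_second:.2f} img/s, {pixels_per_second / 1e6:.2f} Mpx/s")
```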

It is impressive how far the algorithm gets with only 2D image data and no 3D model. Sure, the results aren’t perfect, but the technique is getting there, and it’s already pretty good.