It’s clear that Facebook has an authenticity problem as far as news is concerned. The issue threw the social network directly into the limelight after the 2016 US presidential election. Making matters worse, a lot of people use it as a news source, which makes authenticity and verifiability all the more important.

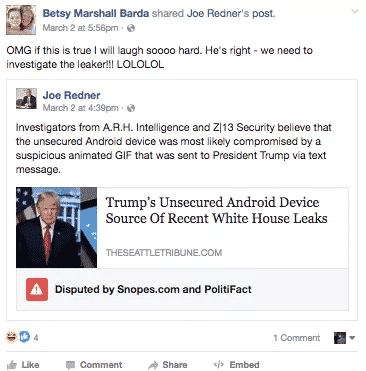

It is a relief, then, that the company has finally started to flag news stories that are perceived as fake, a promise it made back in December. Stories flagged as potentially fake will carry a small warning banner, as reported by Engadget and Gizmodo.

Flagged Fake News

The process is still far from quick, which means the benefits could ultimately be minimal. As Gizmodo reported, a fake news story concerning Trump’s hacked smartphone was only removed after five days, by which time a story like that has usually drawn most of its attention.

The process starts when users or Facebook’s custom software flag a story, after which it has to be checked for authenticity by third-party websites such as Politifact. If two or more of them flag it as well, the story will be shown with a warning.
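For readers who think in code, the flow described above can be sketched roughly as follows. This is only an illustration of the reported rule, not Facebook’s actual implementation: the `Story` class, the `label_for` function, the field names and the second fact-checker name are all hypothetical, and the only details taken from the reports are the initial flag, the third-party check, the "two or more" threshold and the “disputed” label.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# "Two or more" fact-checkers, as described in the reports.
DISPUTE_THRESHOLD = 2


@dataclass
class Story:
    """Hypothetical record for a shared news story."""
    url: str
    # True once users or automated software have flagged the story.
    reported: bool = False
    # Names of third-party fact-checkers that flagged the story as well.
    disputed_by: Set[str] = field(default_factory=set)


def label_for(story: Story) -> Optional[str]:
    """Return the warning label to show, or None if no warning applies."""
    # Nothing is sent for checking unless the story was flagged first.
    if not story.reported:
        return None
    # The warning appears only once enough fact-checkers agree.
    if len(story.disputed_by) >= DISPUTE_THRESHOLD:
        return "disputed"
    return None


story = Story(url="https://example.com/story", reported=True)
story.disputed_by.add("Politifact")
story.disputed_by.add("Checker B")  # hypothetical second fact-checker
print(label_for(story))
```

Note that under this reading a single fact-checker’s objection is not enough, which is one reason the label can take days to appear.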

Of course, that still doesn’t mean Facebook specifically disowns the report or its claims; it settles instead for “disputed”. Whatever the label, it will be difficult to know whether it has any impact on how readily such content is accepted or on the rate at which it is published.

Also, Facebook is currently flagging only political news, and only in the US. Still, it is one of the biggest measures taken so far against the rise of conspiracy theorists in the media, who helped pave the way for the populist movements that dominated 2016.

Good decision by Facebook.

It only applies to Europe, America, etc. Not to Asia, and especially not to Pakistan, where someone or other is always putting out fake news!

People should be able to post anything as long as it is not against community guidelines. As far as fake goes, the “real” news is pretty strange and should also be flagged. This is funny and sad.

Yes, especially in Pakistan, the real news is fake. LOL