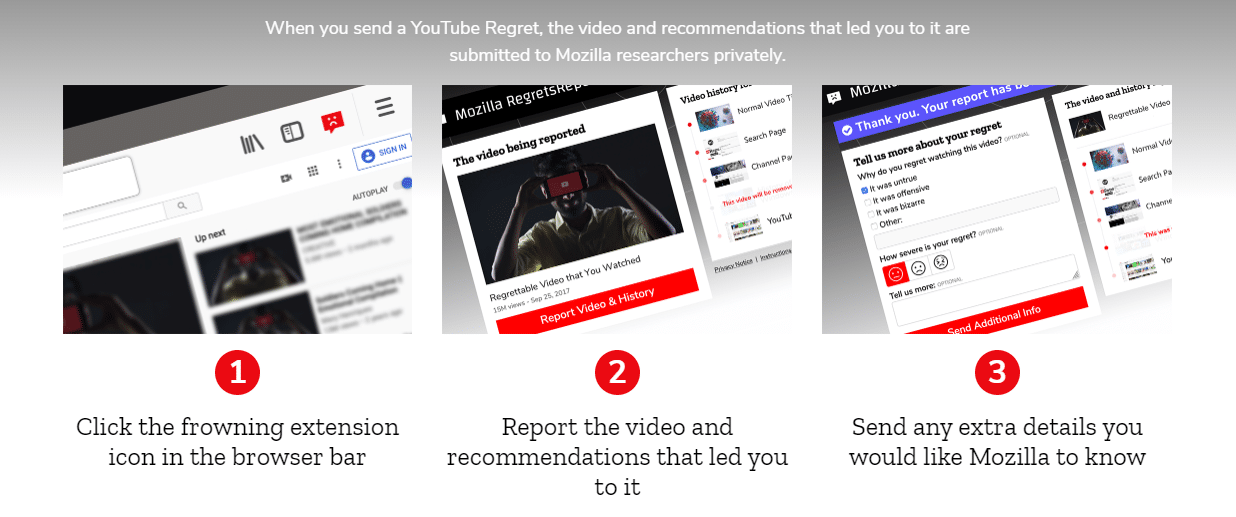

YouTube’s recommendation algorithm is notorious for suggesting weird videos that are completely unrelated to what you usually watch. To help people better understand how YouTube’s recommendations work, Mozilla is introducing a new browser extension dubbed RegretsReporter. The extension is designed to crowdsource research into the regrettable recommendations users get.

The Firefox maker has been planning the extension for a while now and is soliciting help from YouTube users to find problematic patterns. According to the data it made public, one user searched for videos about Vikings and was recommended content about white supremacy. Another searched for “fail” videos and started getting recommendations for grisly videos of fatal car wrecks.

Ashley Boyd, Mozilla’s vice president of advocacy and engagement, said,

“There hasn’t really been a large-scale, independent effort to track YouTube’s recommendation algorithm to understand how it determines which videos to recommend. So much attention goes to Facebook and deservedly so when it comes to misinformation.”

YouTube, for its part, says it is interested in seeing the results of the research. A YouTube spokesperson said,

“However, it’s hard to draw broad conclusions from anecdotal examples and we update our recommendations systems on an ongoing basis, to improve the experience for users. Over the past year, YouTube has launched over 30 different changes to reduce recommendations of borderline content.”

From the looks of it, the research should bring a few interesting aspects of the algorithm to light. The Google-owned video platform has promised on numerous occasions to tweak the algorithm, and even the company’s executives are aware of how poorly its recommendations work.
