Motion- and gesture-based technology has been attempted in various ways in the past. Microsoft tried its hand with the Kinect and motion-driven games on game consoles, while Leap Motion made it possible to navigate the Windows operating system via motion controls.

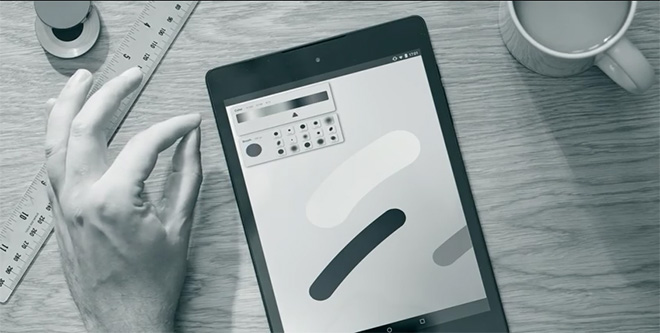

Google’s ATAP Project Soli is different, however. The technology attempts to channel the natural movement of your fingers and hands, accurately detecting even the tiniest motions. The goal is to make it quicker and easier to operate small form factor devices with even smaller displays, such as smartwatches.

The technology uses radar to measure velocity, movement and distance, and can be tuned to interpret input differently depending on that distance. With Project Soli, the team at ATAP sought to have hand motions stand in for some of the gestures commonly used on mobile devices. They also figured out how to track tiny variations in the received signal in order to track the position of individual fingers.
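As a rough illustration of how a radar can turn a received signal into the velocity and distance figures mentioned above, here is a minimal Python sketch of the textbook Doppler and round-trip-delay relations. It is not Soli's actual processing pipeline: the function names and the 60 GHz carrier frequency are assumptions made for the example and do not come from this article.

```python
# Textbook radar relations, shown only to illustrate the idea.
# The carrier frequency and all names here are illustrative assumptions,
# not details of Project Soli's implementation.

SPEED_OF_LIGHT_M_S = 299_792_458.0
ASSUMED_CARRIER_HZ = 60e9  # assumption: a 60 GHz millimeter-wave carrier


def doppler_velocity(doppler_shift_hz: float,
                     carrier_hz: float = ASSUMED_CARRIER_HZ) -> float:
    """Radial velocity (m/s) of a target from the measured Doppler shift.

    The factor of 2 accounts for the signal travelling to the target and back.
    """
    return doppler_shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * carrier_hz)


def range_from_delay(round_trip_delay_s: float) -> float:
    """Distance (m) to a target from the echo's round-trip delay."""
    return SPEED_OF_LIGHT_M_S * round_trip_delay_s / 2.0


if __name__ == "__main__":
    # A finger moving toward the sensor that produces a 400 Hz Doppler shift.
    print(f"velocity: {doppler_velocity(400.0):.3f} m/s")
    # An echo that arrives 2 nanoseconds after transmission.
    print(f"distance: {range_from_delay(2e-9):.3f} m")
```

Under these assumptions, a shift of a few hundred hertz corresponds to a hand moving at roughly one meter per second, and a delay of a couple of nanoseconds to a target a few tens of centimeters away, which is the scale of motion a gesture sensor would need to resolve.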

The radar device can function through surfaces and at a reasonable distance from the hand. Developers will be able to access the translated signal data via a dedicated API, allowing them to interface with various stages of the gesture recognition pipeline.

While the team at ATAP is still working to finalize its technology, the radar hardware has already undergone significant iterative refinements that have shrunk it from the size of a set-top box to around the size of an SD card, making it far more feasible for portable applications. The creators have also managed to achieve scale production within 10 months of development time.

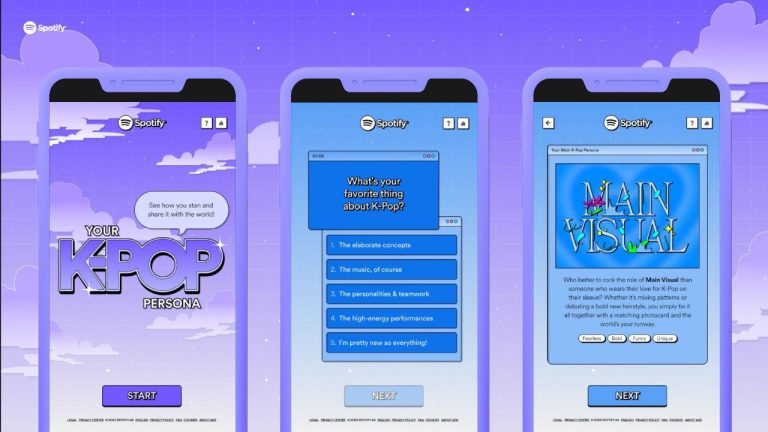

Soli is tentatively set for release later this year, when further information on the technology will also be made available to developers. It could prove to be a game changer, especially when paired with wearable devices such as those running Android Wear.