Many social networks, including Facebook, run facial recognition on the photos you upload in order to gather data about you. Beyond social networks, the web is full of similar software that can automatically recognize a person and infer other attributes such as ethnicity, gender, and surroundings.

As privacy concerns have grown, University of Toronto researchers have developed an AI that counters facial recognition software in a subtle way: it makes minute adjustments to your photos. The research, led by Professor Parham Aarabi and MASc candidate Avishek Bose, has successfully disrupted common facial recognition systems.

Personal privacy is a real issue as facial recognition becomes better and better. This is one way in which beneficial anti-facial-recognition systems can combat that ability.

AI vs AI

To strengthen their solution, the team used an AI technique known as adversarial training, in which two opposing neural networks continually learn from each other. In their case, they built two AIs: one that recognizes faces, and a second that disrupts the first by constantly training itself to counter it.
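The alternating loop described above can be sketched in a few lines. This is a toy illustration only, not the researchers' system: the "detector" is a two-feature logistic classifier rather than a neural network, the "disruptor" learns a single bounded shift `delta`, and the data, the function name `train`, and parameters such as `eps` and `lr` are all assumptions made up for this example.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def score(w, b, x):
    """Detector's estimated probability that feature vector x is a face."""
    return sigmoid(w[0] * x[0] + w[1] * x[1] + b)

def train(rounds=200, eps=0.5, lr=0.1, seed=0):
    rng = random.Random(seed)
    # Hypothetical 2-D "features": faces cluster near (1, 1), non-faces near (-1, -1).
    faces = [(1 + rng.gauss(0, 0.2), 1 + rng.gauss(0, 0.2)) for _ in range(50)]
    others = [(-1 + rng.gauss(0, 0.2), -1 + rng.gauss(0, 0.2)) for _ in range(50)]
    w, b = [0.0, 0.0], 0.0   # detector parameters
    delta = [0.0, 0.0]       # disruptor's perturbation, kept within [-eps, eps]
    for _ in range(rounds):
        # Detector step: one gradient-descent update on log loss,
        # using the faces as currently perturbed by the disruptor.
        shifted = [(x[0] + delta[0], x[1] + delta[1]) for x in faces]
        data = [(x, 1.0) for x in shifted] + [(x, 0.0) for x in others]
        gw, gb = [0.0, 0.0], 0.0
        for x, y in data:
            err = score(w, b, x) - y
            gw = [gw[i] + err * x[i] for i in range(2)]
            gb += err
        n = len(data)
        w = [w[i] - lr * gw[i] / n for i in range(2)]
        b -= lr * gb / n
        # Disruptor step: move delta in the direction that lowers the
        # detector's score on faces, then clip it so the change stays small.
        gd = [0.0, 0.0]
        for x in shifted:
            s = score(w, b, x)
            gd = [gd[i] + s * (1 - s) * w[i] for i in range(2)]
        delta = [max(-eps, min(eps, delta[i] - lr * gd[i] / len(faces)))
                 for i in range(2)]
    return w, b, delta, faces

if __name__ == "__main__":
    w, b, delta, faces = train()
    clean = sum(score(w, b, x) for x in faces) / len(faces)
    shifted = [(x[0] + delta[0], x[1] + delta[1]) for x in faces]
    disrupted = sum(score(w, b, x) for x in shifted) / len(faces)
    print("learned perturbation:", delta)
    print("mean face score, clean vs. disrupted:", clean, disrupted)
```

Each round gives the detector one update against the current perturbation and then gives the disruptor one update against the current detector, which is the back-and-forth that adversarial training relies on.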

This way, both AIs keep learning from each other and keep finding new ways to prevent facial recognition. Avishek Bose says,

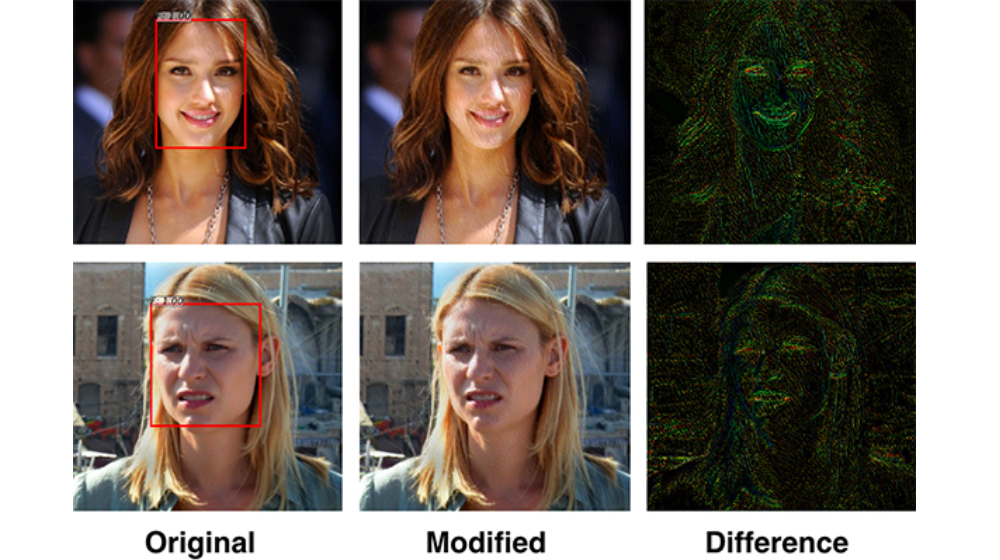

“The disruptive AI can ‘attack’ what the neural net for the face detection is looking for. If the detection AI is looking for the corner of the eyes, for example, it adjusts the corner of the eyes so they’re less noticeable. It creates very subtle disturbances in the photo, but to the detector they’re significant enough to fool the system.”
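To make the idea of a subtle but targeted disturbance concrete, here is a minimal sketch that assumes a toy linear detector instead of a neural network. It uses a gradient-sign step, a well-known generic way to build adversarial perturbations, which is swapped in for illustration and is not necessarily what the Toronto team does. The weights, the eight-pixel "image", and the names `detector_score` and `perturb` are all hypothetical.

```python
import math

def detector_score(pixels, weights, bias):
    """Toy linear 'face detector': returns a probability in (0, 1)."""
    z = sum(p * w for p, w in zip(pixels, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def perturb(pixels, weights, epsilon):
    """Shift every pixel by at most epsilon, against the sign of its weight.

    For a linear detector the gradient of the score with respect to each
    pixel has the same sign as that pixel's weight, so this pushes on
    exactly the features the detector relies on.
    """
    def sign(v):
        return (v > 0) - (v < 0)
    moved = [p - epsilon * sign(w) for p, w in zip(pixels, weights)]
    return [min(1.0, max(0.0, p)) for p in moved]  # keep pixels in [0, 1]

# Hypothetical detector weights and an 8-pixel "image" with values in [0, 1].
weights = [0.9, -0.4, 0.7, -0.8, 0.5, 0.6, -0.3, 0.2]
bias = -1.0
image = [0.6, 0.3, 0.7, 0.2, 0.5, 0.6, 0.4, 0.5]

adversarial = perturb(image, weights, epsilon=0.1)
print("score before:", detector_score(image, weights, bias))
print("score after: ", detector_score(adversarial, weights, bias))
```

In this toy, no pixel moves by more than 0.1, yet the score crosses from above the 0.5 decision threshold to below it, because every small change is aligned with what the detector is looking for.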

We can expect it to work like a filter or a third-party plug-in that slightly modifies your image before you upload it to a social network. The researchers tested it on a library of 600 different faces that were originally detectable, and the new solution reduced the share of detectable faces from 100 percent to 0.5 percent.

Via UoT