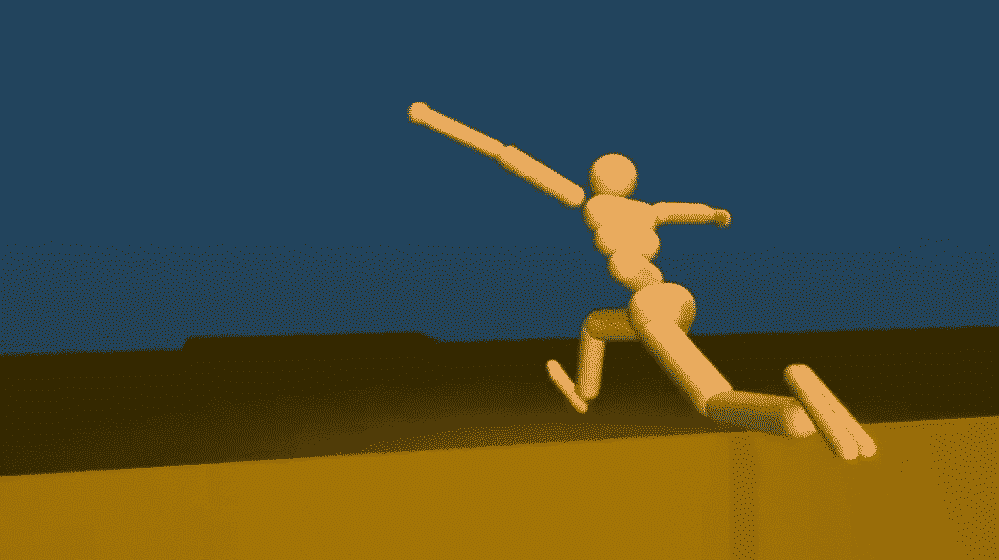

Google’s subsidiary, DeepMind, recently released a research paper showing how its ‘Reinforced learning’ technique has taught its program to walk on its own.

Reinforced Learning

Reinforced learning is quite similar to how a reward system works. Whenever the required results are obtained the program is incentivised with ‘rewards’. On the other hand, if it fails to do so, no reward is given. It’s a simple yet effective technique used for programming artificial intelligence (AI).

The technique shows how it can help a computer program navigate through unfamiliar and complex environments.

Don’t believe a program can actually teach itself? Watch the video below:

All the actions you see in the video performed by the (let’s call it) ‘Stick Man’ were self taught. What this means is that the software was in no way programmed or told how to walk. It did all that on its own by trial and error. Much like a baby does when it’s learning to crawl or walk.

The computer was only given a set of virtual sensors to identify objects around it and a simple command to move from point A to B.

All the maneuvers seen in the video were a result of the program trying to learn how to achieve its goal and hence get ‘rewarded’.

You may find it funny how the ‘Stick Man’ appears to be flailing its arms around in the air, but it has actually learned to use this action as a gyroscope to stabilize itself. Since its aim is not to look good but to run fast, it’s quite remarkable actually.

Usually reinforced learning is considered to be a basic technique and is thought not to be able to cope with complex situations such as running over a wall. But it’s pretty clear that the program actually learned to bend the knees to create a pivoting point and use that to vault over the obstacle.

Future Prospects

We truly are living in the future. This technique has great promise and may very well make programming of robots radically faster as they would be able to learn as they perform basic tasks.

It would be pretty interesting to put the constraint of energy on the program to see how it adapts to it. Maybe it will find more efficient ways of moving from A to B.

Thanks for Articles :

Now Ab Main Theek Se Chal Sakta ho !:p

haha, good one!

Gooooshh I love his hands action! :’D