After introducing fact-checking tools for posted articles, Facebook is taking its measures against fake news a step further. The service is now introducing tools that verify photos and videos to further prevent harmful content from spreading.

The move reflects the growing sophistication of Facebook’s techniques. The feature was previously tested in France and has now proven useful enough for a broader rollout. It cross-checks images, audio, and videos against other news sources and articles on the web to verify their authenticity.

As before, Facebook has partnered with 27 third-party networks, such as AFP, to make sure the “verified” label comes from a reputable news source. More partners are expected to join in the future. Fact-checkers can also flag content themselves if they think it is false.

Machine Learning

To help identify such content, the network uses machine learning, which looks for certain signals associated with harmful content. The content it identifies is then sent to the 27 partners.

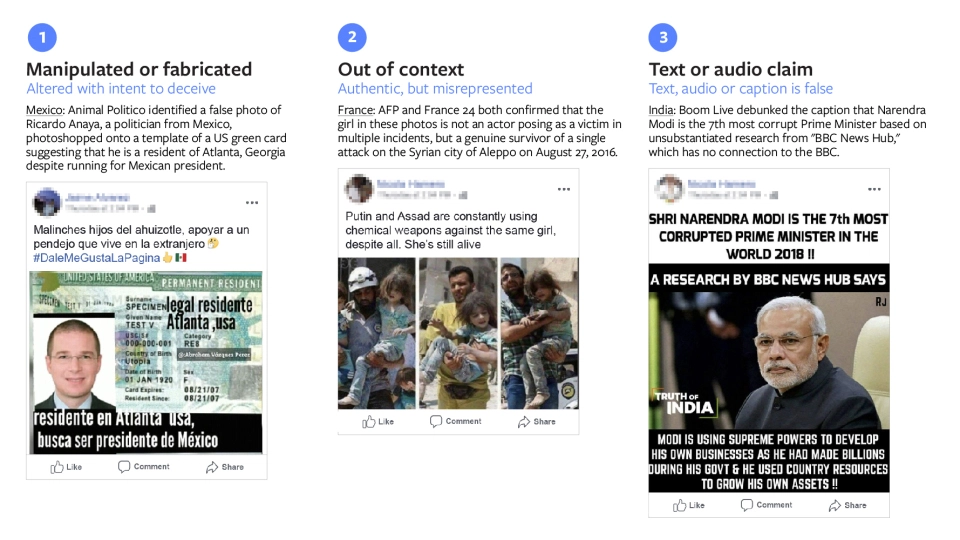

Fact-checkers typically verify authenticity using techniques such as reverse image search and image metadata analysis. Content that potentially contains harmful themes is then tagged with a disclaimer.

The fact-checking service will be available in 17 countries, with the photo and video verification feature available in each market.

Facebook is already losing ground as a credible news source. It started flagging content as fake news early last year, after the issue was thrust into the limelight by the vitriolic 2016 US elections. Fake news remains a central point of contention for the social network’s detractors.

Facebook should

give the blue tick only to pages created by media organizations, and verify only their posts.

Users and other pages should not be allowed to post news, only to share it,

rather than having every page checked.