Social media giant Twitter has an issue where its image previews might be racially biased in favor of white people's faces. The social network's neural network apparently chooses to show white people's faces in cropped previews more often than darker-skinned faces.

The problem was first reported over the weekend, when several Twitter users posted images that each showed a black person and a white person. In the cropped previews on the timeline, Twitter chose to display the white person more often than the black person. The company said that it is looking into the matter.

Trying a horrible experiment…

Which will the Twitter algorithm pick: Mitch McConnell or Barack Obama? pic.twitter.com/bR1GRyCkia

— Tony "Abolish ICE" Arcieri 🦀🌹 (@bascule) September 19, 2020

Twitter responded after numerous people tested the problem and spoke out about it. Liz Kelly, a member of Twitter's communications team, said:

Our team did test for bias before shipping the model and did not find evidence of racial or gender bias in our testing. But it’s clear from these examples that we’ve got more analysis to do. We’re looking into this and will continue to share what we learn and what actions we take.

Users later found that the algorithm appears to favor white cartoon characters as well.

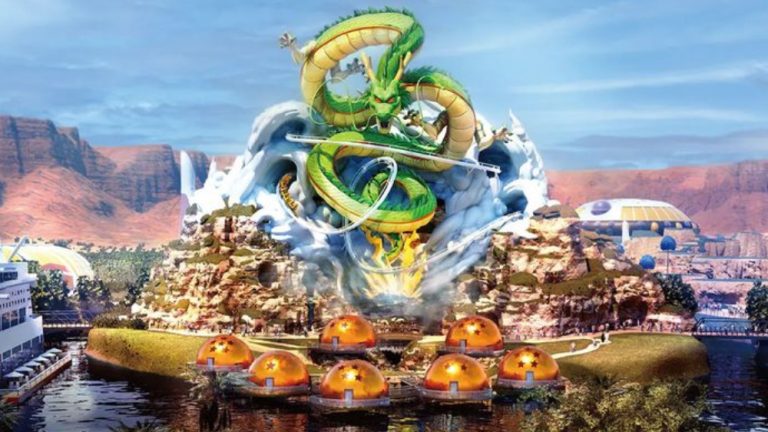

https://twitter.com/_jsimonovski/status/1307542747197239296

Twitter has promised to investigate and fix the issue, but if it escalates, it could become a major PR nightmare for the company. We will update this story as soon as we learn more.